Data harvesting is fundamentally transforming how businesses operate, while simultaneously emerging as a favorite tactic for fraudsters seeking to steal sensitive information. But what exactly is data harvesting? At its core, it’s the systematic process of collecting vast amounts of information from diverse sources, then cleaning and organizing it into an easy-to-use format. While this practice powers legitimate applications in marketing and research, most users remain unaware of the significant risks involved. As data science advances and demand for big data grows, organizations constantly search for innovative ways to access information that provides competitive advantages. Yet the same techniques that fuel business intelligence can also expose individuals to serious privacy violations when personal information, login credentials, and even medical records are harvested without consent.

To understand this digital dilemma, imagine preparing an exquisite all-you-can-eat buffet for valued guests, only to find uninvited “eaters” arriving with massive containers. Instead of dining respectfully, they systematically scoop up the most expensive and unique dishes, leaving empty platters and chaos for genuine customers. This perfectly illustrates what malicious data harvesting bots do to digital assets. Any website hosting valuable data—product catalogs, original content, user reviews, or pricing information—becomes a target. These bots don’t merely visit; they devour resources and steal assets at an industrial scale, disrupting operations and threatening business integrity.

This article explores the complete picture of data harvesting: its essential meaning, how it works behind the scenes, its legitimate applications, and most importantly, the hidden dangers that everyday internet users should recognize but frequently overlook in our increasingly data-driven world.

What Exactly Is Data Harvesting?

Data harvesting (sometimes loosely called large-scale web scraping or crawling) refers to the automated, industrial-scale extraction of large volumes of information from websites, apps, APIs, and other digital sources, often ones that were never designed to hand that data over in bulk.

This is not a human copying and pasting a few paragraphs. It involves millions of automated requests per minute, distributed across thousands of IP addresses, bypassing bot-detection systems, solving CAPTCHAs with AI, and dumping petabytes of text, images, and metadata into cloud storage.

In 2025 the scale is staggering:

- Common Crawl, one of the largest public web datasets, holds petabytes of data spanning hundreds of billions of pages and adds several billion new pages each month.

- Private crawls run by major AI labs are estimated to be 5 to 50 times larger.

- A single well-funded operation can harvest the entire public Reddit or English Wikipedia in a matter of hours.

Difference between data harvesting and web scraping

Though frequently used interchangeably, data harvesting and web scraping have subtle distinctions. Web scraping specifically refers to extracting unstructured data from websites using specialized tools or software. It represents one method within the broader data harvesting process.

Conversely, data harvesting encompasses the entire workflow: collecting data from multiple sources, cleaning it, organizing it, and preparing it for analysis. Unlike data mining, which analyzes datasets to discover patterns, data harvesting stops at gathering and preparing the data; the analysis comes afterwards.

Furthermore, data scraping refers to extracting structured data from databases or spreadsheets, whereas web scraping targets unstructured data from websites. This distinction helps businesses recognize when legitimate collection crosses into potentially problematic territory.
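
To make the "unstructured page in, structured rows out" idea concrete, here is a minimal sketch using only Python's standard library. The HTML fragment and its class names (`product`, `name`, `price`) are invented for illustration; real pages are messier, and real harvesting tools add fetching, retries, and cleaning on top of this step.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical page fragment; the class names are assumptions for this sketch.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Kettle</span><span class="price">$24.99</span></li>
  <li class="product"><span class="name">Toaster</span><span class="price">$39.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text inside <span class="name"> and <span class="price">."""

    def __init__(self):
        super().__init__()
        self.rows = []       # finished records
        self._field = None   # field currently being read, if any
        self._current = {}   # record under construction

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self._current = {}
        elif tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        # Text is only kept while we are inside a span we care about.
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current:
            self.rows.append(self._current)
            self._current = {}


def html_to_csv(html: str) -> str:
    """Turn the markup into CSV text with a header row."""
    parser = ProductParser()
    parser.feed(html)
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(parser.rows)
    return out.getvalue()


print(html_to_csv(SAMPLE_HTML))
```

The scraping step is the parser; the harvesting workflow is everything around it, including the final organized CSV.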

Is data harvesting legal?

The legality of data harvesting depends largely on consent, intent, and regulatory compliance. Collecting publicly available information is often lawful, but the answer varies by jurisdiction and by the kind of data involved, so businesses must exercise caution.

Regulations such as GDPR and CCPA restrict unauthorized data collection, especially where personal information is concerned. Organizations harvesting data ethically should:

- Collect only publicly available information

- Respect website terms of service

- Avoid harvesting sensitive personal data

- Comply with relevant privacy laws

- Consider copyright implications

Legal complications arise particularly when harvesting personal information without consent or violating a website’s terms of service.

How data harvesting works behind the scenes

Behind the digital curtain, data harvesting operates through sophisticated technological mechanisms that most users never see. Nearly half of all internet traffic now comes from automated sources rather than humans, with bot-related web traffic reaching unprecedented levels in recent years.

Common tools and technologies used

Data harvesting relies on specialized software designed for automatic extraction. Popular tools like Octoparse, Data Miner, and Web Scraper offer point-and-click interfaces that extract data without requiring coding skills. These applications can navigate websites, fill forms, follow links, and organize the collected information into structured formats like CSV or Excel files.

- Automated bots and scrapers

The explosion of generative AI has created a “gold rush” for data, with companies deploying increasingly aggressive bots to collect content. These automated programs work tirelessly to extract information from websites, often ignoring traditional controls like robots.txt files. More sophisticated bots even mimic human behavior by adding realistic pauses between clicks and scrolling naturally through pages to avoid detection.
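
For a crawler that does intend to honor those controls, the check itself is cheap. The sketch below uses Python's standard `urllib.robotparser`; the rules, the `ExampleBot` name, and the URLs are made up for illustration. Note that robots.txt is advisory: nothing in it technically prevents a bot from fetching a disallowed path.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for an imaginary site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def build_policy(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


policy = build_policy(ROBOTS_TXT)

# A well-behaved crawler asks before every request.
allowed = policy.can_fetch("ExampleBot", "https://example.com/products/1")
blocked = policy.can_fetch("ExampleBot", "https://example.com/private/report")
delay = policy.crawl_delay("ExampleBot")  # seconds to wait between requests

print(allowed, blocked, delay)
```

An aggressive harvester simply skips this step, which is why robots.txt alone cannot serve as a defense.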

- Cookies, trackers, and hidden scripts

Websites track users through various technologies, primarily cookies—small files stored on browsers that remember user activities. First-party cookies track you on the website you’re visiting, while third-party cookies monitor activity across multiple sites. Moreover, browser fingerprinting techniques use your device’s unique configurations to identify you without cookies. These tracking mechanisms collect everything from browsing habits to time spent on pages, creating detailed behavioral profiles.
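
The fingerprinting idea can be sketched in a few lines: several individually unremarkable attributes are combined into one stable identifier. The attribute names and values below are hypothetical, and production fingerprinting draws on many more signals, but the principle is the same.

```python
import hashlib
import json


def fingerprint(attributes: dict) -> str:
    """Hash a set of device attributes into a short, stable identifier."""
    # Sorting keys makes the result independent of the order attributes arrive in.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    # Truncated to 16 hex characters purely for readability in this sketch.
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


# Hypothetical attribute sets; no cookie is involved at any point.
device_a = {
    "user_agent": "Mozilla/5.0 (X11; Linux x86_64)",
    "timezone": "Europe/Berlin",
    "screen": "1920x1080",
    "fonts": ["Arial", "Helvetica"],
}
device_a_reordered = {
    "fonts": ["Arial", "Helvetica"],
    "screen": "1920x1080",
    "timezone": "Europe/Berlin",
    "user_agent": "Mozilla/5.0 (X11; Linux x86_64)",
}
device_b = dict(device_a, timezone="America/Chicago")

print(fingerprint(device_a))
print(fingerprint(device_a) == fingerprint(device_a_reordered))
print(fingerprint(device_a) == fingerprint(device_b))
```

Because the identifier is derived from the device rather than stored on it, clearing cookies does not change it.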

- APIs and third-party integrations

Application Programming Interfaces (APIs) serve as gateways between applications and data sources. Rather than requiring direct database connections, APIs provide a structured way to retrieve information securely over the internet. Many organizations make their data available through APIs, enabling developers to integrate external information into their applications. Additionally, third-party integrations often operate through embedded scripts that can collect and share user data across multiple platforms.

What is data harvesting used for?

Companies across industries utilize data harvesting for numerous strategic purposes, with each application offering unique benefits alongside potential risks.

- Marketing and advertising

Organizations extract consumer data primarily to understand market trends, track competitor activities, and create personalized marketing experiences. By analyzing harvested information, businesses gain insights into customer behavior, allowing them to develop more targeted advertising campaigns with higher conversion rates. Research shows that companies leveraging customer behavioral insights outperform peers by 85% in sales growth and over 25% in gross margin. Through data mining, marketers segment audiences, build predictive models, optimize campaigns, and deliver real-time personalization based on user context.

- AI training and research

Large language models like GPT-4 depend heavily on harvested data for training, often using publicly available datasets that are inconsistently documented and poorly understood. This lack of transparency creates significant challenges, including potential regulatory compliance issues, copyright risks, exposure of sensitive information, and unintended biases. The Data Provenance Initiative found license miscategorization rates exceeding 50% and license information omission rates of more than 70% in AI training datasets. Language representation in these datasets also skews heavily toward English and Western European languages, potentially leading to bias or underperformance in other regions.

- Lead generation and customer profiling

Data harvesting enables businesses to build detailed customer profiles by integrating digital activities, life events, transaction insights, preferences, and sentiment scoring. Through web scraping, companies automatically gather lead contact information that flows directly into CRM systems. This approach increases sales efficiency since representatives spend less time researching contact details and more time selling. Furthermore, collected data helps businesses personalize outreach, minimize customer churn, optimize pricing, and improve cross-selling strategies.

- Government and policy analysis

Intelligence agencies increasingly purchase and store personal information on citizens with minimal oversight. This commercially available information can reveal sensitive details about personal attributes, private behavior, social connections, and speech patterns. Government agencies navigate laws that typically prevent tracking citizens without court orders or warrants, yet few legal restrictions exist on private companies selling such data.

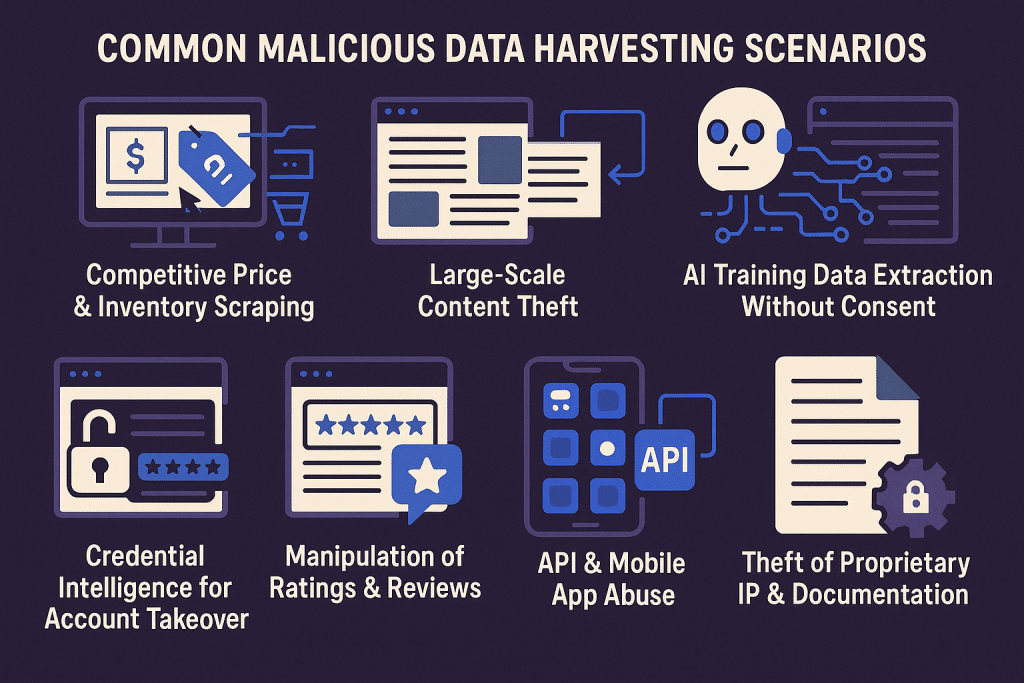

Common Malicious Data Harvesting Scenarios

Although data harvesting can support legitimate analytics, much of today’s large-scale activity is explicitly abusive. Attackers target websites and APIs to steal proprietary data, undermine business models, or gain competitive intelligence. Below are the most common malicious scenarios observed across industries in 2025.

- Competitive Price & Inventory Scraping

E-commerce, travel, and retail platforms face nonstop scraping of prices, stock levels, discount rules, and availability data. Competitors and unauthorized aggregators use this to reverse-engineer pricing strategies, undercut margins, and build shadow comparison sites.

- Large-Scale Content Theft

Publishers, SaaS platforms, blogs, and marketplaces often see their original content scraped and republished across AI content farms, SEO spam networks, and replica websites—hurting search rankings, brand authority, and advertising or subscription revenue.

- AI Training Data Extraction Without Consent

Frontier AI developers and grey-market brokers harvest entire websites, UGC platforms, documentation sites, and media repositories to build training corpora. Once absorbed into model training workflows, removal is nearly impossible.

- Credential Intelligence for Account Takeover

Attackers scrape login pages, user directories, and workflow metadata to automate credential stuffing, username enumeration, and password reset abuse—turning harvested data directly into fraud.
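
One way defenders can spot this pattern in login logs is that a single source fails against many different usernames, whereas a real user who forgot a password fails repeatedly against one. The sketch below is a simplified illustration: the log format, the IP addresses (taken from documentation ranges), and the threshold of five usernames are all assumptions.

```python
from collections import defaultdict


def suspicious_ips(failed_logins, max_distinct_usernames=5):
    """Return IPs whose failed logins span more usernames than the threshold.

    failed_logins is an iterable of (ip, username) pairs.
    """
    usernames_by_ip = defaultdict(set)
    for ip, username in failed_logins:
        usernames_by_ip[ip].add(username)
    return sorted(
        ip
        for ip, names in usernames_by_ip.items()
        if len(names) > max_distinct_usernames
    )


# Hypothetical log: one IP cycling through a harvested username list,
# and one user mistyping their own password three times.
events = [("198.51.100.9", f"user{i}") for i in range(8)]
events += [("192.0.2.4", "alice")] * 3

print(suspicious_ips(events))
```

Attackers who rotate through proxy networks spread their attempts across many IPs, so a per-IP count like this only catches the least sophisticated campaigns.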

- Manipulation of Ratings & Reviews

Fraud rings scrape legitimate reviews to understand sentiment patterns, then use that data to generate highly convincing fake feedback that influences marketplace rankings or damages competitor reputation.

- API & Mobile App Abuse

Scrapers reverse-engineer API endpoints to steal clean, structured datasets such as product catalogs, flight schedules, hotel availability, logistics data, or proprietary search indexes—often far more efficiently than scraping HTML pages.

- Theft of Proprietary IP & Documentation

B2B platforms face harvesting targeting technical manuals, internal knowledge bases, configuration files, and product architectures—fueling industrial espionage and undermining long-term competitive advantage.

How Data Harvesting Actually Hurts Enterprises in 2025

The real cost of data harvesting in 2025 extends far beyond stolen content or inflated traffic numbers. For modern enterprises, these attacks quietly reshape competitive dynamics, distort critical decision-making, and introduce long-tail risks that compound over time. As automation becomes more sophisticated and AI systems become hungrier for data, organizations across every digital sector now face a new category of operational risk that is both subtle and deeply consequential.

- Erodes Competitive Advantage

When competitors or unauthorized aggregators harvest pricing, inventory, product attributes, or proprietary content at scale, they gain access to insights that should never have left your internal systems. This stolen intelligence allows them to react instantly to market movements, neutralizing strategic advantages you invested years to build. In sectors like travel, retail, and SaaS, even small leaks of real-time data can trigger price undercutting, copycat features, or illicit marketplaces built entirely on your assets.

- Corrupts Analytics and Business Forecasting

Data harvesting bots distort traffic patterns, engagement metrics, and demand signals—polluting the datasets enterprises rely on for forecasting. When bot traffic is mixed with real user behavior, companies misread market appetite, misallocate inventory, or misjudge user intent. Inaccurate analytics ripple across departments, leading to poor product decisions, ineffective marketing spend, and financial waste.
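
A small worked example shows how quickly the numbers drift. The session records below are invented, and the `is_bot` flag is assumed to come from some upstream detection step; the point is only the arithmetic.

```python
def conversion_rate(sessions):
    """Share of sessions that ended in a purchase."""
    if not sessions:
        return 0.0
    purchases = sum(1 for s in sessions if s["purchased"])
    return purchases / len(sessions)


# Hypothetical traffic: 4 human sessions (1 purchase) and 6 bot sessions (none).
human_sessions = [
    {"is_bot": False, "purchased": True},
    {"is_bot": False, "purchased": False},
    {"is_bot": False, "purchased": False},
    {"is_bot": False, "purchased": False},
]
bot_sessions = [{"is_bot": True, "purchased": False} for _ in range(6)]
all_sessions = human_sessions + bot_sessions

raw_rate = conversion_rate(all_sessions)
clean_rate = conversion_rate([s for s in all_sessions if not s["is_bot"]])

print(f"with bots: {raw_rate:.0%}, humans only: {clean_rate:.0%}")
```

In this toy data the true conversion rate is 25%, but the dashboard would report 10%, which could look like a failing product page rather than a bot problem.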

- Drives Up Infrastructure and Operational Costs

Bots are extremely resource-intensive. High-volume harvesting drains bandwidth, spikes API usage, and forces cloud autoscaling systems to expand infrastructure to serve nonhuman traffic. Enterprises unknowingly pay for server capacity consumed by attackers. This results in higher CDN bills, slower response times, and degraded reliability during peak hours—damaging both customer experience and revenue.

- Accelerates Fraud and Abuse

Harvested data is rarely the end goal—it’s the fuel. Attackers feed scraped user identifiers, behavioral metadata, and login endpoints into automated pipelines for credential stuffing, account takeover, fake account creation, and payment fraud. The downstream damage is immense: increased chargebacks, customer complaints, support overhead, fraud investigations, and platform abuse that strains internal teams.

- Exposes Enterprises to Regulatory and Privacy Risks

Under GDPR, CCPA, and emerging global privacy laws, organizations are expected to safeguard the personal data they hold, including data exposed through public-facing pages. Large-scale harvesting of personal information (emails, identifiers, interaction logs) can trigger regulatory scrutiny, legal liability, and reputational fallout. Even if the information was technically “public,” regulators may treat unchecked mass scraping as a failure of data stewardship.

- Damages Search Visibility and Brand Integrity

When harvested content appears across AI-generated spam sites, unauthorized databases, or low-quality aggregators, enterprises lose control of their brand narrative. Search engines can struggle to tell the original from its copies, which can dilute organic ranking and siphon traffic to impostor websites. Meanwhile, customers encountering stolen or misrepresented data may no longer trust the authenticity of the brand.

- Degrades User Experience and Customer Trust

Bots slow websites, trigger false positives in security systems, and force companies to add stricter verification steps that inconvenience legitimate customers. When users experience repeated timeouts, CAPTCHA interruptions, or unreliable availability, their trust erodes. In competitive markets, even small UX disruptions translate into abandoned carts, lost bookings, and declining loyalty.

- Evades Traditional Defenses, Creating a False Sense of Security

In 2025, modern harvesting operations are powered by residential proxy networks, browser emulation, AI-driven behavioral mimicry, and distributed infrastructures that look indistinguishable from real users. Legacy defenses—simple rate limits, IP blocks, or static CAPTCHAs—are no longer adequate. Many enterprises remain unaware of the scale of harvesting occurring on their platforms until financial or operational damage becomes undeniable.

How to Stop Malicious Data Harvesting (Strategies That Still Work in 2025)

To effectively defeat the modern, AI-powered harvesting stack, enterprises must evolve their defenses from simple perimeter checks to deep, continuous behavioral analysis. Protection in 2025 demands a multi-layered approach that starts with hygiene and escalates to advanced detection.

1. Basic Hygiene (Necessary but No Longer Sufficient)

These are the foundational security measures every organization must implement, even though they are easily bypassed by determined, sophisticated harvesters using residential proxies and botnets.

- Aggressive Rate Limiting + IP Reputation Lists: This filters out low-effort, volume-based attacks and known suspicious IP ranges, VPNs, and Tor exit nodes.

- Web Application Firewall (WAF) Rules: Excellent for blocking known attack patterns (like SQL injection). Against harvesting, WAFs provide basic defense by filtering malicious payloads but struggle when bots mimic normal browser traffic.

- Polite robots.txt (Mostly Theater at This Point): While necessary for ethical crawlers, malicious harvesters simply ignore these instructions, viewing the file as a target map rather than a security mechanism.
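
As an illustration of the first item, the sketch below pairs a per-IP sliding-window rate limiter with a static reputation list. The limits, the blocked network (a documentation range), and the class and function names are assumptions chosen for the example; a production deployment would typically do this at the edge with shared state.

```python
import ipaddress
from collections import defaultdict, deque

# Hypothetical reputation list; 203.0.113.0/24 is a reserved documentation range.
BLOCKED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def is_blocked(client_ip: str) -> bool:
    """True if the address falls inside any listed network."""
    address = ipaddress.ip_address(client_ip)
    return any(address in network for network in BLOCKED_NETWORKS)


class SlidingWindowLimiter:
    """Allows at most max_requests per client within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client IP -> timestamps of recent requests

    def allow(self, client_ip: str, now: float) -> bool:
        hits = self._hits[client_ip]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


limiter = SlidingWindowLimiter(max_requests=3, window_seconds=10)
decisions = [limiter.allow("192.0.2.10", now=t) for t in (0, 1, 2, 3, 10)]
print(decisions)
print(is_blocked("203.0.113.7"), is_blocked("198.51.100.1"))
```

Both checks key on the IP address, which is exactly why harvesters that rotate through residential proxies slip past them: each address stays under the limit and none appears on the list.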

2. Modern Defenses That Actually Move the Needle

To truly stop persistent data harvesters, the focus must shift to the device and behavioral layer, introducing complexity that significantly raises the cost for attackers.

- Advanced Browser and Device Fingerprinting: Go beyond simple headers. This involves collecting unique, hard-to-fake static variables that help identify inconsistencies, such as Canvas and WebGL rendering, Audio Context, and Font Enumeration.

- Integrity Checks for Headless Environments: Actively look for the artifacts, default settings, and scripting traces left behind by automated browser frameworks like Playwright or Puppeteer, which are commonly used by advanced harvesting bots.
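
A server-side version of these checks can be sketched as a function that collects reasons for suspicion from one request. The request shape and the `client` report are hypothetical; the individual signals are commonly cited ones (a user agent containing `HeadlessChrome`, a missing `Accept-Language` header, and the browser's `navigator.webdriver` flag reported as true). Each is easy for a determined attacker to spoof, so treat them as inputs to a score rather than verdicts.

```python
def automation_signals(request: dict) -> list[str]:
    """Return human-readable reasons this request looks automated."""
    reasons = []
    # Header names are case-insensitive, so normalize them first.
    headers = {k.lower(): v for k, v in request.get("headers", {}).items()}
    # Values a client-side script is assumed to have reported back.
    client = request.get("client", {})

    if "headlesschrome" in headers.get("user-agent", "").lower():
        reasons.append("headless user agent")
    if not headers.get("accept-language"):
        reasons.append("missing Accept-Language")
    if client.get("webdriver") is True:
        reasons.append("navigator.webdriver is true")
    return reasons


# Hypothetical requests for illustration.
bot_request = {
    "headers": {"User-Agent": "Mozilla/5.0 HeadlessChrome/120.0.0.0"},
    "client": {"webdriver": True},
}
human_request = {
    "headers": {
        "User-Agent": "Mozilla/5.0 (Macintosh) Chrome/120.0.0.0",
        "Accept-Language": "en-US,en;q=0.9",
    },
    "client": {"webdriver": False},
}

print(automation_signals(bot_request))
print(automation_signals(human_request))
```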

Why Traditional CAPTCHAs Are Dead Against Serious Harvesting in 2025

Image and audio challenges are now solved quickly by vision and speech models, and bots can emulate human pointer movements with high fidelity. As a result, traditional challenge-response adds friction for real users while offering minimal deterrence against modern harvesting pipelines.

3. Behavior-Driven Bot Detection: The Last Layer That Still Works

The real breakthrough in 2025 is behavioral intelligence. Instead of accepting static indicators such as IP or User-Agent at face value, leading systems evaluate how a visitor actually behaves over time.

This model relies on thousands of micro-behaviors that are extremely difficult for automation to reproduce consistently. It evaluates interaction quality, timing patterns, dynamic friction responses, and the fluidity of human input across the session. When combined with lightweight verification, this creates a flexible and adaptive signal that remains robust even when attackers change infrastructure, scripts, or browser environments.
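
To give a feel for one such micro-behavior, the sketch below looks at timing regularity: scripted clients tend to fire events at near-constant intervals, while human input is uneven. It is a deliberately simplified, single-signal illustration, not how any particular product works; the function names and the 0.2 threshold are assumptions.

```python
import statistics


def interval_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive events."""
    intervals = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(intervals) < 2:
        return None  # not enough data to judge
    mean = statistics.fmean(intervals)
    if mean <= 0:
        return None
    return statistics.pstdev(intervals) / mean


def looks_automated(timestamps, cv_threshold=0.2):
    """Flag sessions whose event timing is suspiciously regular."""
    cv = interval_cv(timestamps)
    return cv is not None and cv < cv_threshold


# Hypothetical event times in seconds since the session started.
scripted = [0.0, 0.5, 1.0, 1.5, 2.0]   # metronome-like clicks
human = [0.0, 0.4, 1.9, 2.3, 4.8]      # irregular pauses

print(looks_automated(scripted), looks_automated(human))
```

A bot can add random jitter to defeat this one check, which is why real systems combine many such signals and evaluate them across the whole session.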

Platforms such as GeeTest apply this exact philosophy, using behavior-driven analysis and dynamic interaction models to neutralize harvesting attempts long before data can be extracted at scale. Integration is typically seamless because the system evaluates intent rather than relying on intrusive challenges.

Conclusion

Data harvesting is a double-edged sword: an essential engine for legitimate AI and market innovation, but also a massive vulnerability leveraged by fraudsters and competitors. This industrial-scale extraction is actively eroding competitive advantage for businesses, corrupting core analytics, and increasing operational costs. Simultaneously, it poses severe risks to individual privacy, fueling scams and the unregulated sale of personal data.

Enterprises that adopt modern, behavior-driven defenses will be far better equipped to maintain the integrity of their data, preserve user trust, and make clear, accurate decisions in an environment shaped by automation. By strengthening visibility into real user intent and reducing the noise created by harvesting bots, organizations can elevate both security and performance, ensuring their digital operations remain resilient as data-driven technologies continue to evolve.